Banking on GenAI | Fintech Inside - Edition #76 - 5th June, 2023

Generative AI has taken the world by storm! This two-part series covers the basics of Generative AI and its applications in financial services.

Hi Insiders, I’m Osborne, Principal at Emphasis Ventures (EMVC).

Welcome to the 76th edition of Fintech Inside. Fintech Inside is the front page of Fintech in emerging markets.

Generative AI has taken the world by storm! Thanks to OpenAI's ChatGPT, everyone's talking about using GenAI in their everyday lives. When it launched, I was a little skeptical of the technology and its applications in financial technology. The more I learned about GenAI from first principles, the more excited I became about the potential of the tech.

In this two-part series, we'll cover the absolute basics of Generative AI, how we got here, some tangible applications of GenAI in financial services and some of the limitations of the technology.

As with all other editions of Fintech Inside, I try to explain the most important aspects of GenAI without getting into the technical details. The next edition of Fintech Inside will detail the tangible applications of GenAI in financial services.

Enjoy another great week in fintech!

Raising funding for your early-stage fintech startup? Reach out to me at os@em.vc

🤔 One Big Thought

Banking on GenAI: Generative AI and Potential Applications in Financial Services - Part 1

In April 2016, I wrote a post on artificial intelligence on my personal blog. The post was about why I believed artificial intelligence should take away some of our jobs. But that's not the topic of this edition of Fintech Inside. This edition of Fintech Inside will cover two topics:

Part 1: What is Generative AI and how did we get here?

Part 2: What are the tangible applications of Generative AI in financial services?

The full post ended up becoming too long so I've broken the piece into two parts as above. As with most of my editions, I'm writing this series to inform my own understanding and thinking on GenAI.

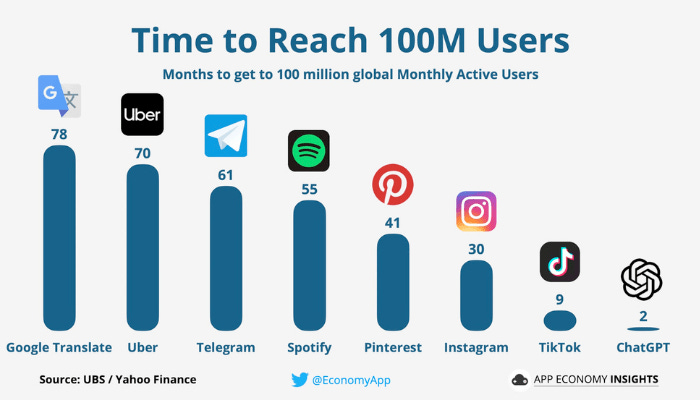

ChatGPT was launched on 30th November, 2022 and soon caught the world's imagination. Within a short two months, it made history when it reached 100 million users on its platform.

ChatGPT itself is an application of a recent sub-field of the broader discipline of Artificial Intelligence (AI) called Generative Artificial Intelligence or GenAI.

Let's backtrack a little bit: what is AI? In my blog post from April 2016, I tried looking for an appropriate description of AI and found the following:

The Oxford dictionary defines it as such: Artificial Intelligence: The theory and development of computer systems able to perform tasks normally requiring human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.

This definition, in my view, is a very pedantic take on what AI truly is and does. I prefer to use the definition below, coined by Claudson Bornstein, Assistant Professor, Computer Science, The Federal University, Rio de Janeiro, Brazil, cited from Eugene Fink's university page.

Artificial Intelligence: The Study of Common Sense

A good analogy for AI is studying and applying algorithms to teach computer systems what is, to us humans, common sense. That's been my go-to analogy to understand this world of AI. It's not perfect, but going by this same analogy, if AI is like a newborn baby, GenAI is a grown-up child (~5-12 years old).

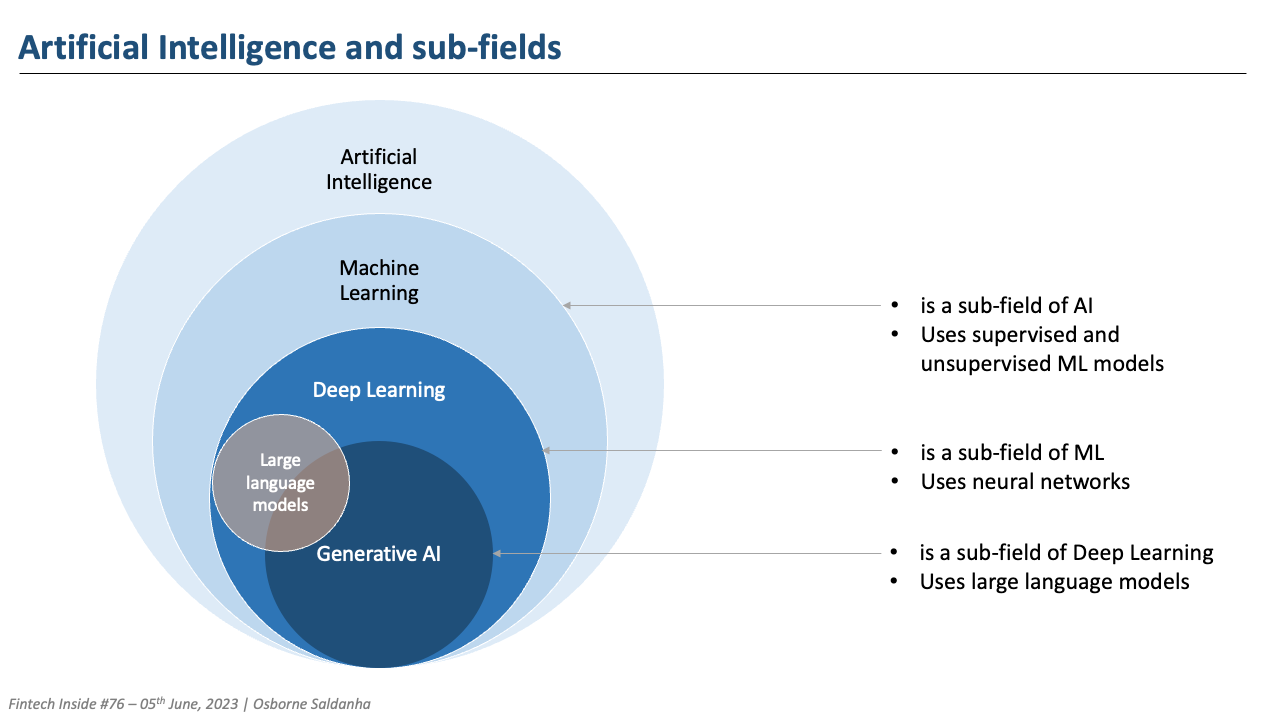

I say it's not perfect because AI is a whole discipline in itself. GenAI is a sub-field within AI that has evolved over the years out of Deep Learning, which is a sub-field of Neural Networks, which is itself a sub-field of Machine Learning, which in turn is one of the main branches of AI.

GenAI builds off of technologies such as Generative Adversarial Networks (GANs) developed in 2014, Transformers developed by Google in 2017, and Contrastive Language-Image Pre-training (CLIP) developed by OpenAI in 2021. GenAI broke out in 2022 as models became much more performant and cost effective to train and serve, and their output improved considerably. The massive jump in the ability of GenAI models to generalize across language tasks they are not specifically trained on came not just from better algorithms (the neural network invented by Google in 2017, called a Transformer, played a huge role here), but from sheer size as well.

All of that can get complicated real fast for the non-technical among us. Here's an easier way to understand it. Think of Deep Learning as a field of algorithms that help "predict" a certain outcome or "classify" a data set - called Discriminative. Deep Learning algorithms mimic the individual neurons in the human brain to learn from labeled or unlabeled datasets and give out an absolute output. Deep Learning algorithms perform a specific task. For example: I train a Discriminative model (e.g. a recurrent neural network) on a bank's stock performance for the past 5 years and ask it what the bank's stock price will be a year from now. Based on the training data, the model will predict the likely stock price.
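For the curious, here is a minimal sketch of the "predict a specific outcome" idea. To keep it tiny, a plain straight-line fit stands in for the neural network, and the prices are made up for a hypothetical bank stock, so treat it as an illustration of the behaviour rather than how a real model would be built.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Made-up year-end prices for a hypothetical bank stock (not real data).
years = [1, 2, 3, 4, 5]
prices = [100.0, 110.0, 120.0, 130.0, 140.0]

slope, intercept = fit_line(years, prices)
prediction = slope * 6 + intercept  # the "price a year from now"
print(round(prediction, 2))  # 150.0
```

Run it as many times as you like and the answer for year 6 stays the same: one input, one fixed output.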

Generative AI, on the other hand, is a field of algorithms that help "generate" new content (audio, image, text etc.). It takes in a certain input (prompt) to find the probability of the most likely output based on the training data (labeled, unlabeled or semi-labeled) and generates the response. But here's the thing: unlike a Discriminative model, where a specific output is received, GenAI understands the distribution of data and learns the patterns even in unstructured content. It then generates the most likely output in the sequence as it goes, i.e. either word-by-word or token-by-token or by other methods. But the output is probabilistic and generative. For example: I train a Generative model (e.g. a large language model) on the collected works of Shakespeare and ask it to respond to my future text messages in Romeo's style. Based on the training data, if I receive a text asking "how are you?", the model will give an output like "Verily, I traverse life's stage with fortitude and grace". The output will be different every time I generate a response because the model is probabilistic.
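Here is a toy sketch of word-by-word generation. It is nowhere near a large language model: it only counts which word tends to follow which in a tiny made-up corpus and then samples the next word from those counts. The corpus and the function names are assumptions for illustration only.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up "training data" in a Shakespeare-ish flavour (illustrative only).
CORPUS = (
    "verily i fare well . "
    "verily i fare with grace . "
    "i fare with fortitude and grace ."
)

def train_bigram_model(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(model, prompt, max_words=8, rng=None):
    """Extend the prompt one word at a time by sampling from the learned counts."""
    rng = rng or random.Random()
    output = prompt.split()
    for _ in range(max_words):
        followers = model.get(output[-1])
        if not followers:          # no known continuation: stop generating
            break
        candidates = list(followers)
        weights = [followers[word] for word in candidates]
        next_word = rng.choices(candidates, weights=weights, k=1)[0]
        output.append(next_word)
        if next_word == ".":       # treat the full stop as end of message
            break
    return " ".join(output)

model = train_bigram_model(CORPUS)
for seed in range(3):
    print(generate(model, "verily", rng=random.Random(seed)))
```

The same prompt can come back as different sentences depending on the random draw, which is the probabilistic behaviour described above in miniature.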

How did we get here? The inflection point that truly set the ball rolling for Generative AI was the invention of the Transformer by Google researchers in 2017. The Transformer was initially invented to improve the efficiency and accuracy of language translation. Even though the researchers didn't do a great job of highlighting the potential impact this invention would have on the field of AI (they probably didn't realise it themselves), the community was quick to implement it. Today, from what I understand, all large language models implement Transformers. Transformer is what the T in GPT stands for - Generative Pre-Trained Transformer.

At a high level, a Transformer model learns contextual relationships between words in a sentence. It achieves this learning by using a mechanism called self-attention, which allows the model to weigh the importance of different words in a sequence based on their context. This method is in contrast to traditional recurrent neural network (RNN) models, which process input sequences sequentially and do not have a global view of the sequence. This video by Google explains Transformers really well.
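For readers who want to see the mechanics, below is a stripped-down sketch of self-attention. It assumes made-up two-number "embeddings" for three words and skips the learned weight matrices a real Transformer applies first, so it shows the weighing idea only, not a production implementation.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product: a simple measure of how similar two vectors are."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Simplified self-attention: queries, keys and values are the word vectors themselves.

    A real Transformer first multiplies each vector by learned weight matrices;
    that step is skipped here to keep the sketch short.
    """
    scale = math.sqrt(len(vectors[0]))
    outputs, all_weights = [], []
    for query in vectors:
        # Score this word against every word in the sentence (including itself).
        scores = [dot(query, key) / scale for key in vectors]
        weights = softmax(scores)
        # The new representation is a weighted mix of all word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors))
                 for i in range(len(query))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up 2-dimensional "embeddings" for a three-word sentence.
words = ["bank", "river", "loan"]
vectors = [[1.0, 1.0], [1.0, 0.0], [0.0, 1.0]]

_, attention = self_attention(vectors)
for word, weights in zip(words, attention):
    print(word, [round(w, 2) for w in weights])
```

Each printed row shows how much one word "pays attention" to every word in the sentence at once, which is the global view that sequential RNNs lack.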

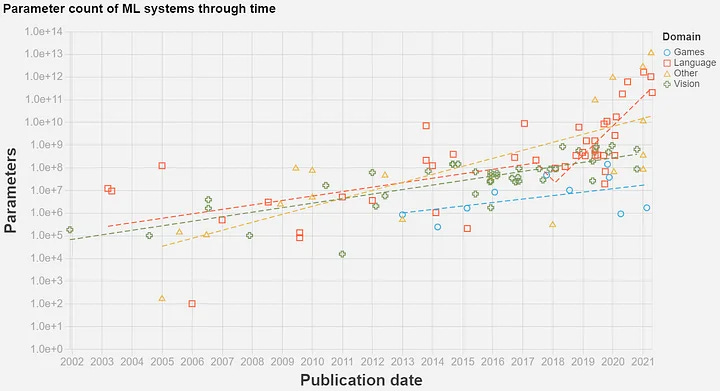

The invention of the Transformer allowed neural network models to scale massively in size while being more efficient. As per MIT, "What does it mean for a model to be large? The size of a model—a trained neural network—is measured by the number of parameters it has. These are the values in the network that get tweaked over and over again during training and are then used to make the model’s predictions. Roughly speaking, the more parameters a model has, the more information it can soak up from its training data, and the more accurate its predictions about fresh data will be."

GPT-3 has 175bn parameters. Jurassic-1, a commercially available large language model launched by AI21 Labs in 2021, has 178bn parameters. Gopher, a model released by DeepMind in December 2021, has 280bn parameters. Megatron-Turing NLG has 530bn. Google's Switch-Transformer and GLaM models have 1tn and 1.2tn parameters, respectively. The largest LLM is estimated to be OpenAI's GPT-4, although OpenAI has not disclosed its size. Parameters are the weights on the connections between the nodes of the neural network (plus a bias per node), not the nodes themselves. By comparison, the best deep learning models before the age of Transformers had a few million parameters. Another crude comparison is the human brain, which is estimated to have 86bn neurons and on the order of 100tn synapses.
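To make "number of parameters" a little more concrete, here is a small sketch that counts them for a plain fully connected network. The layer sizes are hypothetical, and large language models use a more elaborate architecture, so this only illustrates how the count is built up.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of nodes per layer, input layer first.
    """
    total = 0
    for inputs, outputs in zip(layer_sizes, layer_sizes[1:]):
        weights = inputs * outputs   # one weight per connection between the two layers
        biases = outputs             # one bias per node in the receiving layer
        total += weights + biases
    return total

# Hypothetical network: 784 inputs, two hidden layers, 10 outputs.
layers = [784, 128, 64, 10]
print(sum(layers), "nodes")                     # 986 nodes
print(count_parameters(layers), "parameters")   # 109386 parameters
```

Even this toy network has over a hundred times more parameters than nodes, which gives a feel for how quickly the numbers grow as models get wider and deeper.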

Transformative invention! Where do we go from here? GenAI is still in its early days. The technology is already generating exceptional outputs that are actually usable. Its applications are still evolving and are at the bleeding edge of technology. There's no doubt that OpenAI forced us to reimagine the possibilities of GenAI and, more importantly, forced other companies to fast track their GenAI use cases in the public domain. Large Language Models are only going to scale larger, GPUs are only going to get cheaper, some researcher will invent a more efficient model to make generative and specific outputs possible, you'll be able to use LLMs on your smartphone and other edge devices, and entrepreneurs are going to find better use cases for GenAI. It's bound to happen. This is a good thing.

But you're not here because this is a tech newsletter. You're here because this is a fintech newsletter - and I'll get to the fintech part in the next edition. I wanted to take this entire edition to make sure you and I understood what GenAI is and what it isn't. This was an attempt at understanding GenAI from a first principles perspective. I hope this edition achieved that outcome.

The next edition of Fintech Inside will cover GenAI in financial services: tangible use cases, benefits and limitations of the technology, and much more. Subscribe to the newsletter to ensure you get the edition.

I'll leave you with this podcast interview of Kevin Scott, CTO of Microsoft. The section of the video I want you to see runs from 39:17 to 41:33. This line of his resonated with me a lot: "There is no historical precedent where you get all of these beneficial things (technological innovations) by starting from pessimism first. Pessimism doesn't get you to optimistic outcomes".

1-min Anonymous Feedback: Your feedback helps me improve this newsletter. Click UPVOTE 👍🏽 or DOWNVOTE 👎🏽

✨ Singapore Fintech Living Room presented by AWS

Join us and other Singaporean founders, senior operators and investors at the Fintech Living Room! This will be the best of the Southeast Asian fintech ecosystem under one roof.

Thanks to our sponsors: AWS, Carta, Saison Capital and Aspire.

We are hosting a fireside Q&A (under the Chatham House Rule) to get candid about the state of fintech today. Space is limited at the venue, so register now!

🌏 International

Diebold Nixdorf is seeking bankruptcy protection to stay afloat. Mass Mutual Life Insurance Company acquired a majority interest in Counterpointe Sustainable Advisors, a provider of creative energy solutions financing. Card fraud in Europe declines significantly to 0.028%. British neobank Monzo achieved profitability while reducing its annual losses. Revolut surpasses 30 million customers worldwide. The Saudi Central Bank permitted Umg Alholol Trading Co. and Drahim App to test their open banking solutions in its regulatory sandbox.

🏷️ Other Notable Nuggets

🎵 Song on loop

Fintech updates can get boring, so here's an earworm: Up for some excellent Pakistani rap? This song Happy Hour by Talha Anjum (Youtube / Spotify) is super tight. It has some Urdu, some Hindi, some English and banger vibes.

👋🏾 That's all Folks

If you’ve made it this far - thanks! As always, you can reach me at connect@osborne.vc. I’d genuinely appreciate any and all feedback. If you liked what you read, please consider sharing or subscribing.

See you in the next edition.